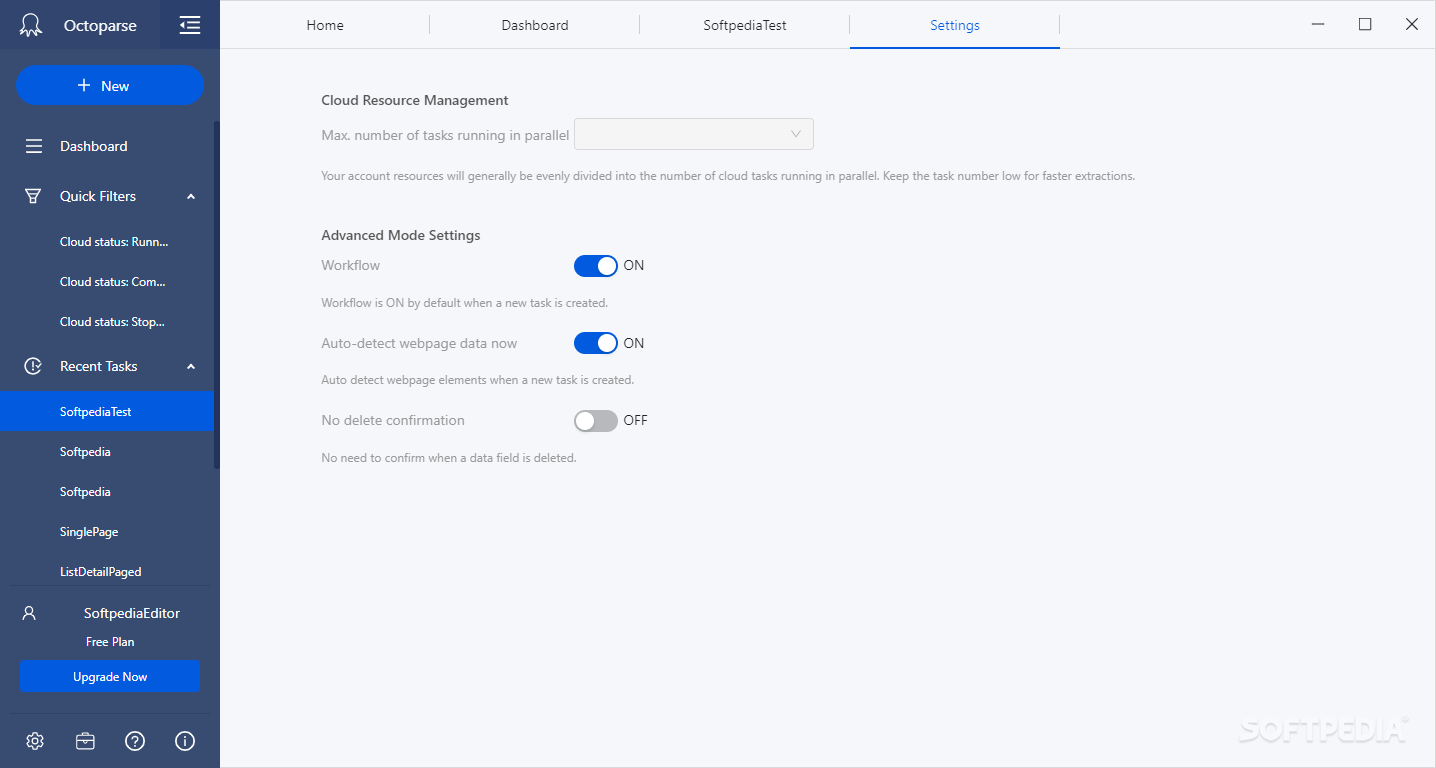

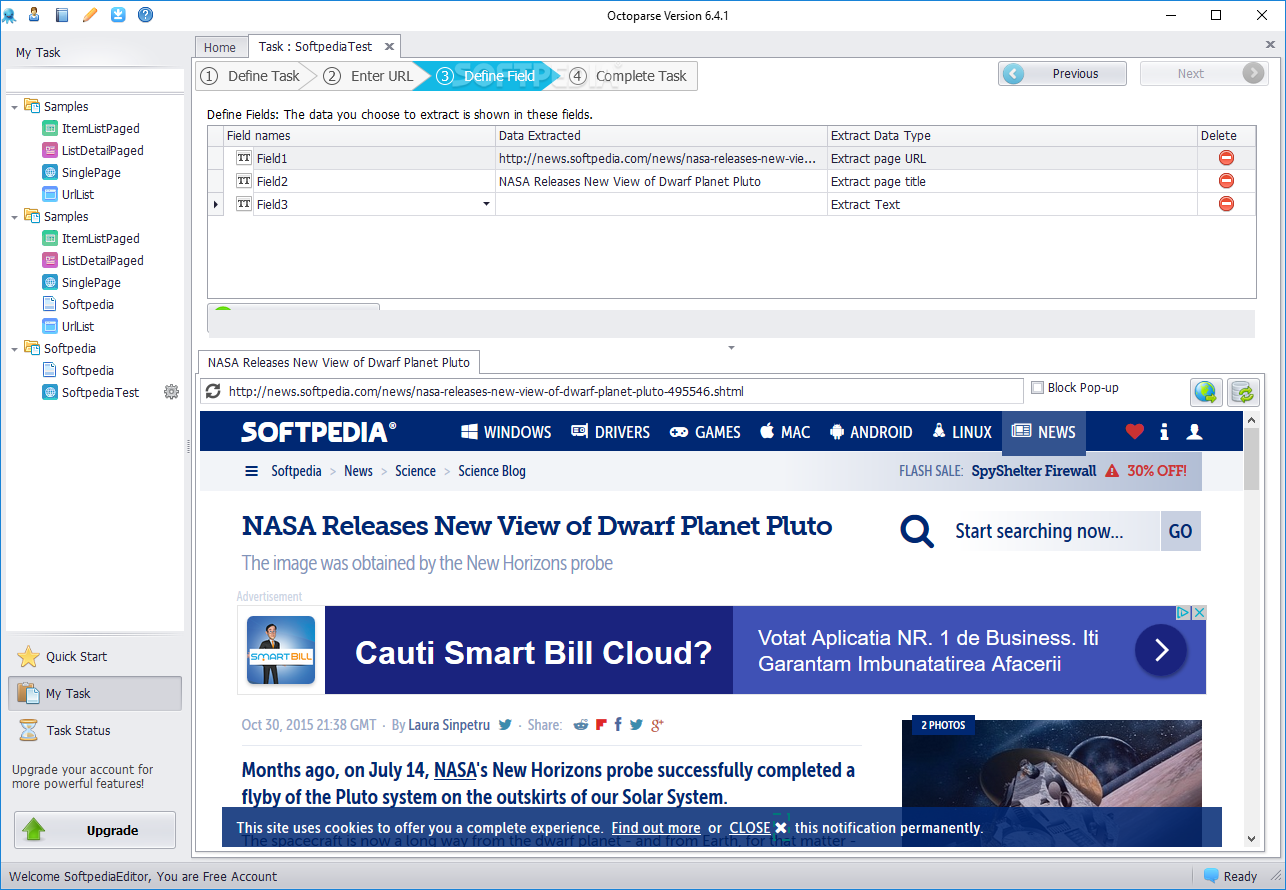

Scraping images seems to be a popular request. In this tutorial, you will learn how to extract images from websites using Octoparse.

Unfortunately, you can’t use Octoparse to extract the image itself. But you can extract an image as the URL where it is stored on the website. You can scrape the URLs first, and then bulk download the images by using a “download from URL” tool.

For an updated version of this article, please check this post.

First, open the target web page in the built-in browser. If you want the URLs of all the images in this category, you need to configure pagination for the extraction. Wait until the page has loaded, then scroll down to the bottom of the page.

Now you can start to extract the URLs of the images. Make sure you locate the appropriate tag (the image address should be in the image tag). Then select “Extract image address of this term”. Next, drag the second “Loop Item” before the “Click to paginate” action.

Now we’re done configuring the extraction rule. Choose Local Extraction to run the task on your computer. The URLs of all the images will be extracted in this field. Export the result to an Excel file or another database format and save it to your computer.

Then use a Chrome extension, Tab Save, to download the images. Enter your download links: copy all the image URLs and paste them in the textbox. Click the download button and all the images will be downloaded to your computer.

If you have any questions or ideas, feel free to contact us or join our Facebook group: Octoparse Community.

Octoparse is a free client-side Windows web scraping software that turns unstructured or semi-structured data from websites into structured data sets, no coding necessary. It’s an easy-to-use web scraping tool that collects data from the web. Crawlers run in Octoparse are determined by the extraction rules you configure. An extraction rule tells Octoparse which website to open and where to find the data you plan to crawl. Being a Windows application, Octoparse works well for static and dynamic websites, including those whose pages use Ajax. It provides high-speed data collection, running up to 10 concurrent threads. You can choose from various export formats such as CSV, Excel, HTML, TXT, and databases (MySQL, SQL Server, and Oracle).

Octoparse simulates human operation to interact with web pages. Its remarkable features, such as filling out forms and entering a search term into a textbox, make it much easier to extract web data. You can run your extraction project either on your own machines (Local Extraction) or in the cloud (Cloud Extraction). Octoparse provides a visual operation pane, which is very user-friendly and straightforward. It simulates human web browsing behavior like opening a web page, logging into an account, entering text, and pointing at and clicking web elements. Just click the information on the website in the built-in browser and perform the extraction, and you will get the structured data you need. Scraping the web on a large scale simultaneously, based on distributed computing, is the most powerful feature of Octoparse. After you upload your configuration project to the cloud, you can choose to perform the extraction concurrently by using many cloud servers.
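The two-stage workflow this tutorial describes (collect the image addresses from the image tags first, then bulk download from those URLs) can also be sketched in a few lines of Python. This is only an illustration using the standard library, not how Octoparse or Tab Save work internally. The names `ImgSrcParser`, `extract_image_urls`, `fetch_bytes`, and `download_images`, the sample HTML, and the `example.com` addresses are all made up for this sketch.

```python
import os
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse
from urllib.request import urlopen


class ImgSrcParser(HTMLParser):
    """Collects the src attribute of every <img> tag it sees."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.urls = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names for us.
        if tag == "img":
            src = dict(attrs).get("src")
            if src:
                # Resolve relative addresses against the page URL.
                self.urls.append(urljoin(self.base_url, src))


def extract_image_urls(html, base_url):
    """Stage 1: return the absolute URL of each image in the page."""
    parser = ImgSrcParser(base_url)
    parser.feed(html)
    return parser.urls


def fetch_bytes(url):
    """Default fetcher: a plain HTTP GET (no retries, no custom headers)."""
    with urlopen(url, timeout=30) as response:
        return response.read()


def download_images(urls, out_dir, fetch=fetch_bytes):
    """Stage 2: save every URL into out_dir and return the saved paths."""
    os.makedirs(out_dir, exist_ok=True)
    saved = []
    for index, url in enumerate(urls):
        # Prefix with the index so identical file names do not clash.
        name = os.path.basename(urlparse(url).path) or "image"
        path = os.path.join(out_dir, f"{index:04d}_{name}")
        with open(path, "wb") as f:
            f.write(fetch(url))
        saved.append(path)
    return saved


# Hypothetical page fragment, only to show stage 1 without touching the network.
sample_html = '<div><img src="/photos/a.jpg"><img src="https://cdn.example.com/b.png"/></div>'
print(extract_image_urls(sample_html, "https://example.com/gallery"))
```

To download for real, pass the extracted list to `download_images(urls, "images")`. The `fetch` parameter is there so the download step can be swapped out, for example to add headers a site requires or to test without network access.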
